Overview

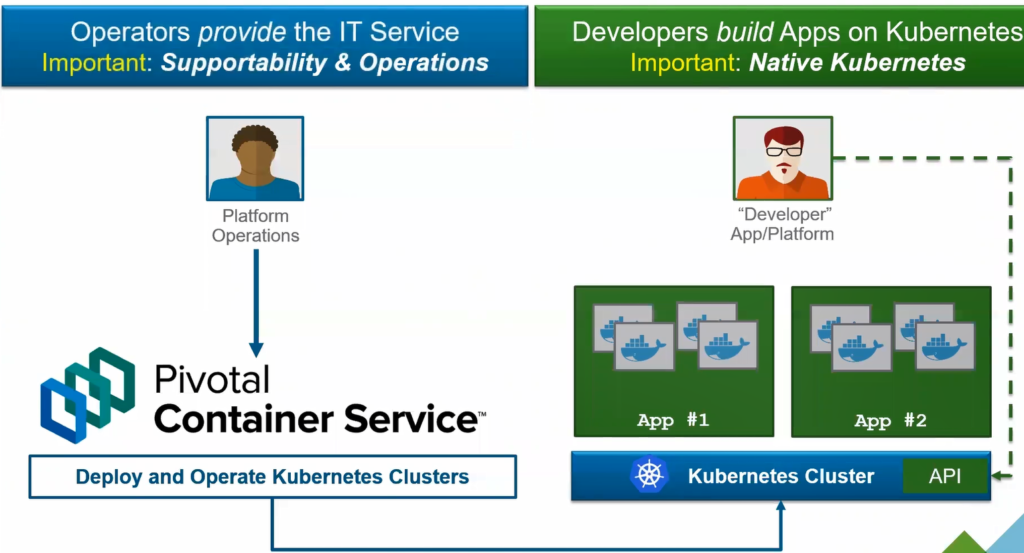

At its core, VMware Tanzu Kubernetes Grid Integrated Edition (TKGI, formerly known as Enterprise PKS) is all about automatically provisioning and maintaining the infrastructure required to stand up a production-grade Kubernetes (k8s) cluster. Once the base configuration is complete, infrastructure administrators can easily deploy and operate production-grade k8s clusters on demand, either for individual users or for multiple teams, while developers keep interacting with the same Kubernetes API they are used to working with (illustrated below).

Why

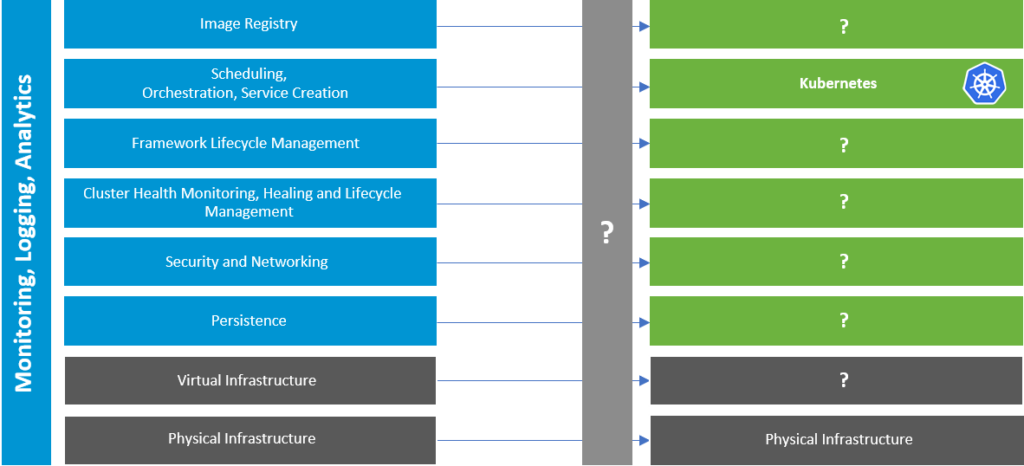

The decision to adopt container-based workloads in your production environment requires a serious investment: running k8s in production is not as straightforward as running it in your home or lab environment. Multiple solutions are required, and they have to work together. The figure below shows the layers that a production-grade k8s cluster needs in order to run container-based production workloads. As you can see, Kubernetes is only one piece of the puzzle. How are you going to select the other pieces?

One way would be to manually choose from the wide range of products listed in the CNCF Landscape (https://landscape.cncf.io/) and try to fit these pieces into each of the layers above. There are a few problems with this approach:

- How are you going to determine which solutions to use and their interoperability with one another?

- How are you going to handle patching of these products?

- How are you going to handle Day 2 operations such as scaling, health checks and lifecycle management of your k8s clusters? And the list goes on.

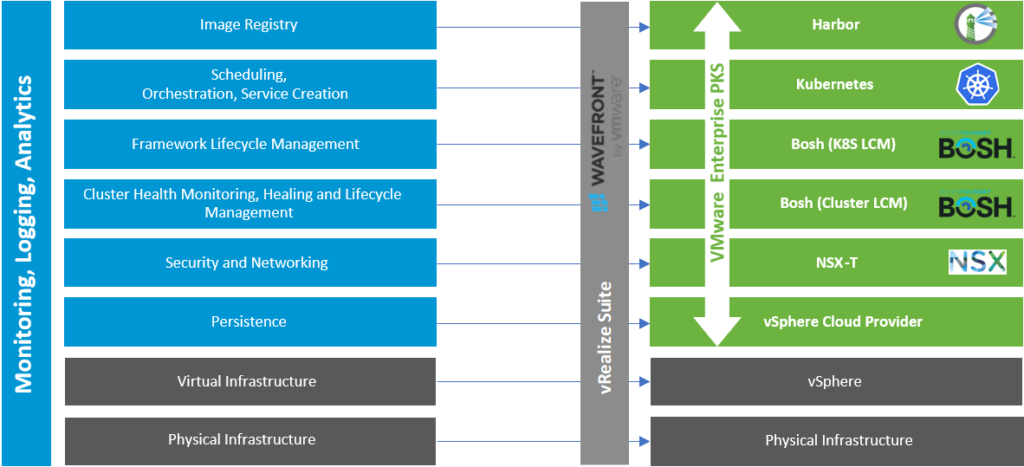

With TKGI, all of these solutions are pre-packaged and chosen for you (see figure below), which ensures their interoperability with one another, and all of them are fully supported. In the next section, we take a closer look at each of these layers.

TKGI Layers

Virtual Infrastructure (vSphere)

Many production environments today already run on vSphere, VMware’s server virtualization product suite, which allows production workloads to run on VMs. In a k8s environment, your containers are wrapped in pods, and your pods in turn run on VMs. The VMs provide the base OS and the resources that your pods require, and they can leverage all the benefits that vSphere provides, such as HA, vMotion and DRS.

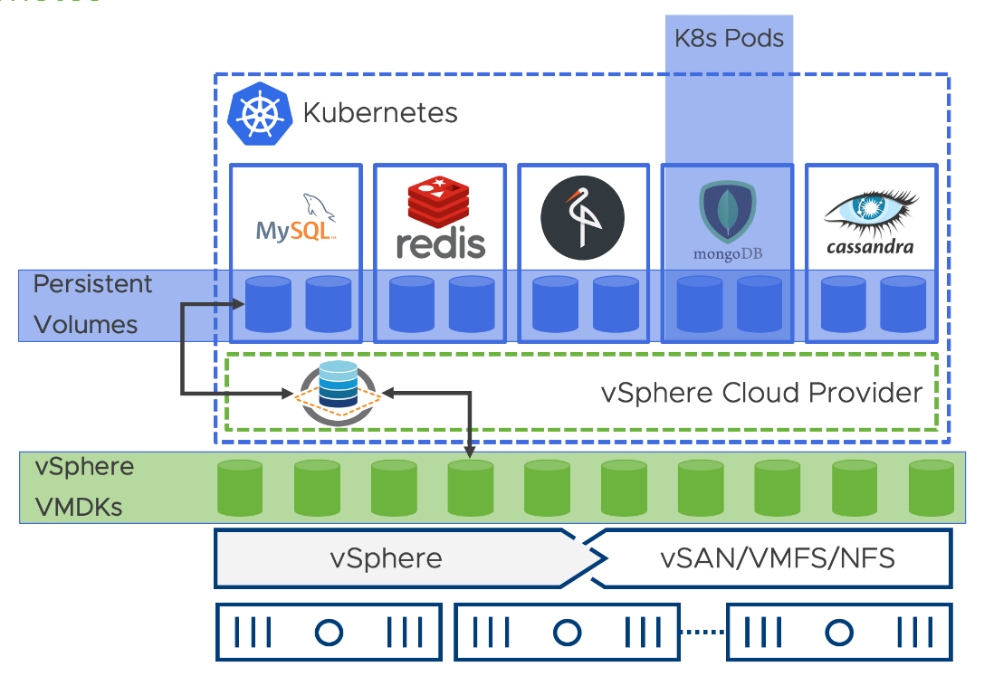

Persistent Storage (vSphere Cloud Provider)

Containers are ephemeral and stateless: once a container dies, all of its information is lost. Although 80% of your applications might be stateless, your backend applications (e.g. transactions, records) are not. Stateful applications require data persistence (i.e. mapping of stored data to a container) and therefore need persistent storage. As an infrastructure admin, one of your responsibilities will be to choose a storage platform with the proper plugins to connect to your Kubernetes environment (via the Container Storage Interface). The next step is to configure the cluster and all of its nodes to detect and use that storage platform. On top of that, the integration has to be patched and tested to keep up with the fast pace of Kubernetes changes.

With TKGI, the vSphere Cloud Provider plugin (see figure 3) lets your k8s clusters use native vSphere datastores for the persistent volumes and persistent volume claims defined in your YAML manifests, without those manifests requiring specific information about the datastore.
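To make the last point concrete, here is a minimal sketch that builds the two objects involved as plain Python dictionaries and prints them as a JSON `List` (kubectl accepts JSON as well as YAML). It assumes the in-tree `kubernetes.io/vsphere-volume` provisioner with thin-provisioned disks; the class name `thin-disk`, the claim name `orders-db-data` and the 10Gi size are hypothetical. Note that the claim names only the storage class, never a datastore.

```python
import json


def make_storage_class(name: str) -> dict:
    """StorageClass backed by the in-tree vSphere provisioner (assumed)."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        # Assumption: vSphere Cloud Provider in-tree provisioner, thin disks.
        "provisioner": "kubernetes.io/vsphere-volume",
        "parameters": {"diskformat": "thin"},
    }


def make_pvc(name: str, size: str, storage_class_name: str) -> dict:
    """PersistentVolumeClaim that names a StorageClass but no datastore.

    The provisioner behind the class decides where the backing disk lives.
    """
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class_name,
            "resources": {"requests": {"storage": size}},
        },
    }


# Hypothetical names and size, for illustration only.
storage_class = make_storage_class("thin-disk")
pvc = make_pvc("orders-db-data", "10Gi", storage_class["metadata"]["name"])
manifest_list = {"apiVersion": "v1", "kind": "List", "items": [storage_class, pvc]}

if __name__ == "__main__":
    print(json.dumps(manifest_list, indent=2))
```

A pod would then reference `orders-db-data` in its `volumes` section; swapping the datastore behind `thin-disk` would not require touching the claim.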

Networking and Security (NSX-T)

NSX-T is VMware’s network virtualization and security platform. It provides overlay network communications (logical switching, routing) and network services (NAT, load balancing) for both your VM and container workloads. Choosing NSX-T eliminates the need for multiple separate solutions (e.g. Flannel for L2, Calico for L3, NGINX for load balancing) and gives you end-to-end configuration and troubleshooting for your environment. Some features of NSX-T include:

- Namespaces: NSX-T builds a separate network topology per K8S namespace

- Pods: Every Pod has its own logical port on an NSX logical switch dedicated to its Namespace

- Nodes: Every Node can host Pods from different Namespaces, each on its own IP subnet / topology

- Firewall: Every Pod has NSX Distributed Firewall (DFW) rules applied to its interface

- Routing: High-performance East/West and North/South routing using NSX’s routing infrastructure

- Visibility: The full suite of NSX-T troubleshooting tools works for pod networking

- IPAM: NSX-T provides IPAM services enabling policy-based dynamic IP allocation for all Kubernetes components
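The per-namespace topology and IPAM features can be pictured with a toy model. The Python sketch below is not NCP’s implementation: it assumes a hypothetical pod IP block (`10.200.0.0/16`), hands each namespace its own /24 subnet from that block on first use, reserves the first host address of each subnet for a gateway (also an assumption), and allocates pod IPs from the namespace’s own subnet.

```python
import ipaddress
from typing import Dict, Iterator


class ToyNamespaceIPAM:
    """Toy model of per-namespace IPAM; not NCP's actual algorithm."""

    def __init__(self, pod_block: str, subnet_prefix: int = 24) -> None:
        # Lazily carve the pod IP block into equal-sized subnets.
        self._free_subnets: Iterator[ipaddress.IPv4Network] = (
            ipaddress.IPv4Network(pod_block).subnets(new_prefix=subnet_prefix)
        )
        self.subnets: Dict[str, ipaddress.IPv4Network] = {}
        self._free_hosts: Dict[str, Iterator[ipaddress.IPv4Address]] = {}

    def subnet_for(self, namespace: str) -> ipaddress.IPv4Network:
        """Return the namespace's subnet, assigning the next free one on first use."""
        if namespace not in self.subnets:
            subnet = next(self._free_subnets)  # StopIteration once the block is exhausted
            hosts = iter(subnet.hosts())
            next(hosts)  # assumption: first usable address is reserved for the gateway
            self.subnets[namespace] = subnet
            self._free_hosts[namespace] = hosts
        return self.subnets[namespace]

    def allocate_pod_ip(self, namespace: str) -> ipaddress.IPv4Address:
        """Hand out the next free address in the namespace's subnet."""
        self.subnet_for(namespace)
        return next(self._free_hosts[namespace])  # StopIteration once the subnet is full


if __name__ == "__main__":
    ipam = ToyNamespaceIPAM("10.200.0.0/16")  # hypothetical pod IP block
    for ns in ["team-a", "team-b", "team-a"]:
        print(ns, ipam.allocate_pod_ip(ns))
```

Because each namespace draws from its own subnet, pods from `team-a` and `team-b` can share a node while still landing on different subnets, which is the property the Nodes bullet describes.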

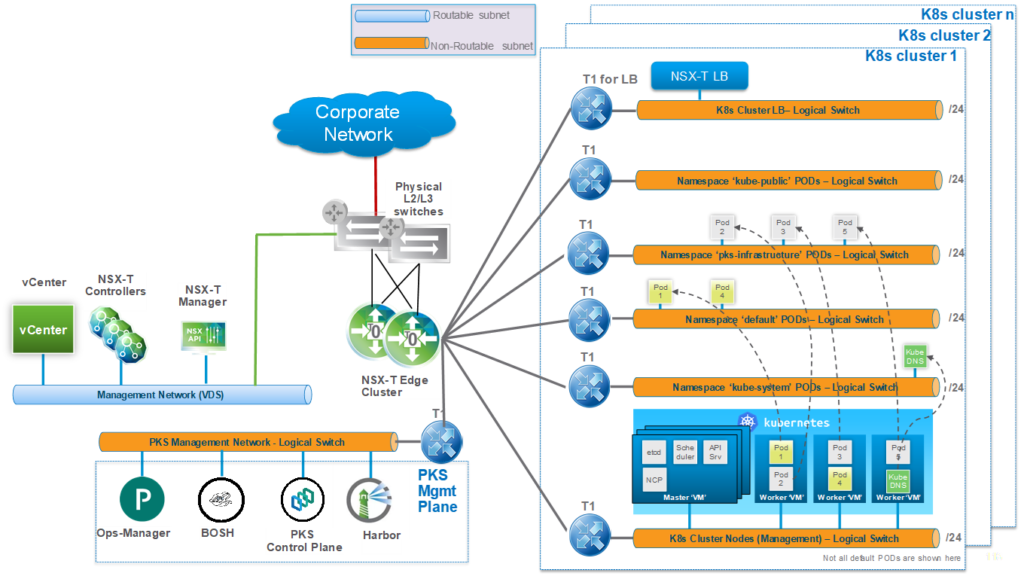

The figure below shows an example of a logical topology for a TKGI and NSX-T deployment. The NSX-T Container Plugin (NCP), running on the master nodes, provides a communication interface between the Kubernetes API server and the NSX-T API. This allows network objects (routers, switches, load balancers) to be provisioned automatically based on changes reported by the K8S API server, for both single-tenant and multi-tenant environments.
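The NCP behaviour described above is essentially a watch-and-react loop. The following sketch is a simplified, hypothetical model of that translation step, not NCP’s code: `WatchEvent` mimics a Kubernetes watch event (`ADDED`, `MODIFIED` and `DELETED` are the real watch event types), and the returned strings stand in for the NSX-T API calls NCP would make. The one-router-and-one-switch-per-namespace mapping is a simplification.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class WatchEvent:
    """Simplified Kubernetes watch event; type is ADDED, MODIFIED or DELETED."""
    type: str
    kind: str
    name: str
    namespace: str = ""
    service_type: str = ""  # only meaningful for Services


def plan_network_actions(event: WatchEvent) -> List[str]:
    """Translate one API-server event into hypothetical NSX-T provisioning steps."""
    if event.kind == "Namespace":
        if event.type == "ADDED":
            # One router and one logical switch per namespace (simplified).
            return [f"create router {event.name}", f"create logical switch {event.name}"]
        if event.type == "DELETED":
            return [f"delete logical switch {event.name}", f"delete router {event.name}"]
    if event.kind == "Pod":
        port = f"{event.namespace}/{event.name}"
        if event.type == "ADDED":
            return [f"create logical port {port} on switch {event.namespace}"]
        if event.type == "DELETED":
            return [f"delete logical port {port}"]
    if event.kind == "Service" and event.service_type == "LoadBalancer":
        vip = f"{event.namespace}/{event.name}"
        if event.type == "ADDED":
            return [f"create load balancer virtual server {vip}"]
        if event.type == "DELETED":
            return [f"delete load balancer virtual server {vip}"]
    return []  # everything else is out of scope for this sketch


def run(events: Iterable[WatchEvent]) -> List[str]:
    """Process events in order, as a watch loop would."""
    actions: List[str] = []
    for event in events:
        actions.extend(plan_network_actions(event))
    return actions


if __name__ == "__main__":
    demo = [
        WatchEvent("ADDED", "Namespace", "team-a"),
        WatchEvent("ADDED", "Pod", "web-0", namespace="team-a"),
        WatchEvent("ADDED", "Service", "web", namespace="team-a", service_type="LoadBalancer"),
    ]
    for action in run(demo):
        print(action)
```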

Cluster and K8S LCM (BOSH)

Kubernetes is in charge of monitoring the life cycle of our pods, but who monitors the life cycle of our Kubernetes clusters? Ensuring that the nodes of our cluster(s) are healthy and up to date is of utmost importance, as all your workloads will be running on them. In TKGI, BOSH is the brains behind deploying clusters and managing their Day 2 life cycle operations, including:

- Cluster Deployment (Day 1)

- Cluster Health (Day 2)

- Cluster Scaling (Day 2)

- Cluster Patching and Upgrades (Day 2)
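As a rough illustration of the health and scaling items, the sketch below models one reconciliation pass: it compares the desired instance count per instance group with the VMs whose BOSH agents are or are not responding, and emits actions. The function name, data shapes and action strings are hypothetical; in BOSH itself this work is done by the Director together with its Health Monitor, whose Resurrector recreates unresponsive VMs.

```python
from typing import Dict, List


def reconcile(desired: Dict[str, int], agents: Dict[str, Dict[str, bool]]) -> List[str]:
    """One toy reconciliation pass over BOSH-style instance groups.

    desired: instance group name -> desired number of VMs
    agents:  instance group name -> {vm_id: True if its agent is responding}
    Returns hypothetical action strings in the order they would be issued.
    """
    actions: List[str] = []
    for group, count in sorted(desired.items()):
        vms = agents.get(group, {})
        # Sort healthy VMs first, so that scaling down removes unhealthy ones.
        ordered = sorted(vms, key=lambda vm_id: (not vms[vm_id], vm_id))
        keep, surplus = ordered[:count], ordered[count:]
        # Scaling (Day 2): remove VMs beyond the desired count.
        actions.extend(f"delete {group}/{vm_id}" for vm_id in surplus)
        # Health (Day 2): recreate kept VMs whose agent stopped responding.
        actions.extend(f"recreate {group}/{vm_id}" for vm_id in keep if not vms[vm_id])
        # Scaling (Day 2): create VMs missing from the desired count.
        actions.extend(f"create {group}/new-{i}" for i in range(count - len(keep)))
    return actions


if __name__ == "__main__":
    print(reconcile(
        {"master": 1, "worker": 3},
        {"master": {"m0": True}, "worker": {"w0": True, "w1": False}},
    ))
```

Preferring healthy VMs when scaling down avoids recreating a VM only to delete it in the same pass.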

What is BOSH?

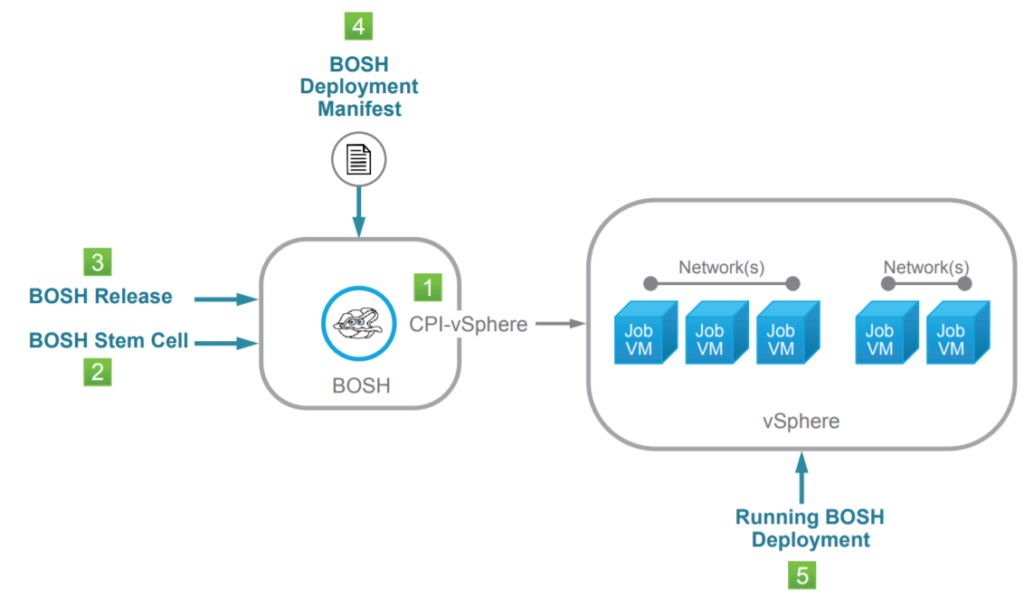

BOSH is essentially a tool that allows you to deploy and manage distributed applications (K8S clusters, in the case of TKGI) independently of the underlying infrastructure. To understand how it achieves this, let’s take a closer look at the objects that interact with a BOSH controller, known as the BOSH Director (refer to the above figure).

- Cloud Provider Interface (CPI) – allows BOSH to provision the infrastructure objects (VMs, storage, networks) required for a given deployment. With the proper CPI, BOSH behaves consistently across different cloud platforms (e.g. vSphere, AWS, Azure, GCP).

- Stemcell – a bare-bones Linux virtual machine template on which BOSH installs the distributed applications. The stemcell also contains a BOSH agent: once the VM starts up, the agent communicates with the controller to install releases and to monitor the life cycle of the VM.

- Release – the software bundle (a .tgz archive) to be installed (e.g. Kubernetes) onto a VM built from a stemcell.

- BOSH Deployment Manifest – a YAML file that describes which distributed application is to be deployed. It references the BOSH releases, the stemcell and related metadata (number of master and worker nodes, etc.).
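To show how these objects fit together, the following Python sketch builds a manifest using the standard BOSH v2 top-level keys (`name`, `releases`, `stemcells`, `update`, `instance_groups`) and checks that every instance group points at a declared stemcell alias and every job at a declared release. The deployment, release, job, VM type, AZ and network names are hypothetical placeholders, not the ones TKGI generates; `masters` and `workers` stand in for the node-count metadata mentioned above.

```python
import json
from typing import List

RELEASE = "demo-k8s-release"  # hypothetical release name
STEMCELL_ALIAS = "default"


def instance_group(name: str, instances: int, job: str) -> dict:
    """One instance group: N identical VMs built from the stemcell, running a release job."""
    return {
        "name": name,
        "instances": instances,
        "azs": ["z1"],            # hypothetical availability zone (from cloud config)
        "vm_type": "medium",      # hypothetical VM type (from cloud config)
        "stemcell": STEMCELL_ALIAS,
        "networks": [{"name": "default"}],
        "jobs": [{"name": job, "release": RELEASE}],
    }


def make_manifest(masters: int, workers: int) -> dict:
    """Assemble a minimal deployment manifest as a Python dict."""
    return {
        "name": "demo-k8s-cluster",
        "releases": [{"name": RELEASE, "version": "latest"}],
        "stemcells": [{"alias": STEMCELL_ALIAS, "os": "ubuntu-xenial", "version": "latest"}],
        "update": {
            "canaries": 1,
            "max_in_flight": 1,
            "canary_watch_time": "30000-300000",
            "update_watch_time": "30000-300000",
        },
        "instance_groups": [
            instance_group("master", masters, "kube-apiserver"),
            instance_group("worker", workers, "kubelet"),
        ],
    }


def check_references(manifest: dict) -> List[str]:
    """Report instance groups that point at undeclared stemcells or releases."""
    releases = {r["name"] for r in manifest["releases"]}
    stemcells = {s["alias"] for s in manifest["stemcells"]}
    problems: List[str] = []
    for group in manifest["instance_groups"]:
        if group["stemcell"] not in stemcells:
            problems.append(f"{group['name']}: unknown stemcell {group['stemcell']!r}")
        for job in group["jobs"]:
            if job["release"] not in releases:
                problems.append(f"{group['name']}: job {job['name']!r} uses unknown release {job['release']!r}")
    return problems


if __name__ == "__main__":
    manifest = make_manifest(masters=1, workers=3)
    assert not check_references(manifest)
    print(json.dumps(manifest, indent=2))
```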

Kubernetes

Kubernetes is a container orchestrator that helps deploy, manage, update and scale your pods (i.e. container workloads). With TKGI, developers interact with the same upstream K8S that they are used to working with, and they are assured of running the latest stable release.

Harbor

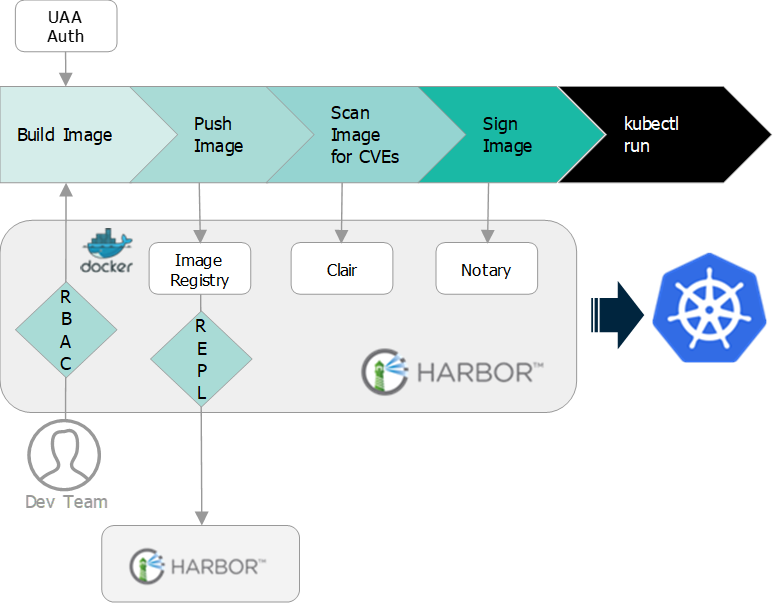

Harbor is an enterprise-grade private container registry that provides your environment with a secure location to store and scan container images before they are used in production. Some of Harbor’s features (see illustration) are listed below:

- Role-Based Access Control (RBAC)

- LDAP/AD Integration

- Image Vulnerability Scanning (Clair)

- Notary Image Signing

- Policy-Based Image Replication

- Graphical User Portal & RESTful API

- Image Deletion & Garbage Collection

- Auditing
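Harbor groups repositories into projects, and its role-based access control is applied per project, so tooling often needs to split an image reference into registry, project, repository and tag. A minimal parser could look like the sketch below. It assumes references of the form `<registry>/<project>/<repository>[:<tag>]` and ignores digest references; `harbor.example.com`, the project names and the `is_from_registry` helper are hypothetical.

```python
from typing import NamedTuple


class HarborImageRef(NamedTuple):
    registry: str
    project: str
    repository: str
    tag: str


def parse_harbor_ref(ref: str, default_tag: str = "latest") -> HarborImageRef:
    """Split '<registry>/<project>/<repository>[:<tag>]' into its parts.

    Assumes the registry host is always present and rejects digest
    (@sha256:...) references, which a real parser would also need to handle.
    """
    if "@" in ref:
        raise ValueError(f"digest references are not handled by this sketch: {ref!r}")
    parts = ref.split("/")
    if len(parts) < 3:
        raise ValueError(f"expected <registry>/<project>/<repository>, got {ref!r}")
    registry, project = parts[0], parts[1]
    rest = "/".join(parts[2:])  # repositories may themselves contain '/'
    # Only the final path component can carry a tag; the registry may carry a port.
    repository, sep, tag = rest.rpartition(":")
    if not sep:
        repository, tag = rest, default_tag
    return HarborImageRef(registry, project, repository, tag)


def is_from_registry(ref: str, registry: str) -> bool:
    """True if the image reference points at the given (private) registry."""
    try:
        return parse_harbor_ref(ref).registry == registry
    except ValueError:
        return False


if __name__ == "__main__":
    print(parse_harbor_ref("harbor.example.com/payments/api:1.4.2"))
    print(is_from_registry("nginx:1.25", "harbor.example.com"))
```

A check like `is_from_registry` is one way a pipeline might ensure that production manifests only pull images that have gone through Harbor’s scanning and signing.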

Summary

VMware TKGI is a fully supported, production-grade Kubernetes platform. With its deep integration with NSX-T, it provides your company with an end-to-end solution for running both container-based and VM production workloads.